A few weeks back I wrote about the difference between configuration and provisioning, noting primarily that they are two distinct tasks. It remains an important distinction because it matters most right where the rubber meets the road (or the app meets the network). As the infrastructure/network side of the house is where we’re concentrating our efforts to apply DevOps, it’s necessary to dig a bit deeper on this topic.

So today we’ll examine a couple of concrete examples of the difference – and why it’s important.

Your application is being deployed and it needs, wonder of wonders, a load balancer. Cause, elasticity. Scale. You know, cloudy type stuffs.

So you grab that golden image and launch that virtual machine with Whatever Your Favorite Load Balancing Thing might be (HAProxy, Nginx, F5, etc.). Voila! You’re ready to go, right?

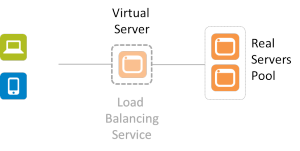

Not by a long shot. You’ve provisioned a load balancing service, yes. But is it configured? Probably not. In addition to the basic networking needed (IP addresses, VLANs, etc.) there are also a whole bunch of application-specific things that must be configured. There’s no “golden” load balancing service that can be generically applied to your application. Period. At a minimum the virtual server (which represents your application to the rest of the world) needs two things: an IP address and a pool of app instances to select from.

That means some configuration must occur after provisioning. Even if all other settings are the same (LB algorithm, L7 app routing rules, TCP options, etc.), you still have to tell the load balancer which pool of real servers to select from and what IP address to use so clients can connect.
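To make that split concrete, here is a minimal sketch of the per-application part, assuming an HAProxy-style target. The `VirtualServer` class, the `render_haproxy` helper and the example addresses are all hypothetical names made up for illustration. Only the directives in the rendered text (`frontend`, `bind`, `default_backend`, `backend`, `balance`, `server ... check`) are standard HAProxy configuration keywords.

```python
from dataclasses import dataclass, field
from ipaddress import ip_address


@dataclass
class VirtualServer:
    """Per-application settings that no golden image can know in advance."""
    name: str
    vip: str                       # address clients connect to
    port: int
    pool: list[str] = field(default_factory=list)  # "host:port" of each app instance
    algorithm: str = "roundrobin"  # a default like this could live in the image


def render_haproxy(vs: VirtualServer) -> str:
    """Render a minimal HAProxy-style frontend/backend pair for one app."""
    ip_address(vs.vip)  # raises ValueError if the VIP is not a valid IP address
    if not vs.pool:
        raise ValueError("a virtual server needs at least one pool member")
    lines = [
        f"frontend {vs.name}_front",
        f"    bind {vs.vip}:{vs.port}",
        f"    default_backend {vs.name}_pool",
        "",
        f"backend {vs.name}_pool",
        f"    balance {vs.algorithm}",
    ]
    # One "server" line per app instance, with health checks enabled.
    for i, member in enumerate(vs.pool, start=1):
        lines.append(f"    server app{i} {member} check")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    shop = VirtualServer("shop", "192.0.2.10", 80,
                         ["10.0.0.11:8080", "10.0.0.12:8080"])
    print(render_haproxy(shop))
```

The algorithm gets a default because it could plausibly be the same everywhere. The VIP and the pool get no default at all, which is the whole point: they only exist once you know which application you’re fronting.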

This is true for pretty much all application services (infrastructure) that support an application deployment. Because there’s no single golden image that just “works” for everything, and no way to use dynamic configuration options for everything (DHCP for IP addresses is one thing, but there’s no “dynamic load balancing algorithm selection” service out there yet), there remains a set of options that must be configured after provisioning occurs. Consider application security like a web app firewall (WAF). It has to be configured to protect a specific application. It has to learn the URIs and the data expected to be exchanged so it recognizes when some interaction is out of bounds and potentially dangerous. That’s unique to the application, and thus it requires some specific configuration after provisioning.
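A toy illustration of why that WAF policy can’t ship in the image: the sketch below assumes a simple allowlist model in which each known URI maps to the parameters it expects and a pattern for each. The `POLICY` table, its paths and patterns, and the `is_allowed` function are all invented for this example, and a real WAF does far more than this.

```python
import re

# Hypothetical per-application policy: path -> {parameter name: allowed pattern}.
# Every entry here is specific to one app, so it has to be supplied after provisioning.
POLICY = {
    "/login": {
        "user": re.compile(r"[A-Za-z0-9_]{1,32}"),
        "password": re.compile(r".{8,128}"),
    },
    "/search": {
        "q": re.compile(r"[\w \-]{1,64}"),
    },
}


def is_allowed(path, params, policy=POLICY):
    """Return True only if the path is known and every parameter fits its pattern."""
    expected = policy.get(path)
    if expected is None:
        return False  # unknown URI: out of bounds for this application
    for name, value in params.items():
        pattern = expected.get(name)
        if pattern is None or not pattern.fullmatch(value):
            return False  # unexpected parameter, or a value outside the expected shape
    return True


if __name__ == "__main__":
    print(is_allowed("/search", {"q": "load balancer"}))
    print(is_allowed("/search", {"q": "<script>alert(1)</script>"}))
    print(is_allowed("/admin", {}))
```

Swap in a different application and the function stays the same while the entire `POLICY` table changes. That table is the configuration.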

It’s important to note this distinction lest we get caught up in believing that we can simply create a golden image of all our network infrastructure and services and put them into production with the touch of a button.

This little reality also helps explain why it doesn’t really matter whether the network service in question is launched on custom hardware or some server off the shelf. The bulk of the work when automating the deployment of network services is in the configuration, not the provisioning.

While you can minimize the amount of post-provisioning configuration necessary, you can’t eliminate it entirely. That’s why API-enabled, programmable infrastructure is a critical piece of the larger DevOps picture. APIs, programmable templates, and similar capabilities are what enable configuration to be automated and orchestrated so that it doesn’t become the bottleneck in the deployment process.
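As a rough sketch of what automating that step might look like, the snippet below compares the pool a load balancer currently has with the pool the application wants, then applies the difference through whatever API the device exposes. The function names are hypothetical, and `add_member` / `remove_member` are stand-ins for real API calls, which will differ for every product.

```python
def plan_pool_changes(current, desired):
    """Work out which pool members to add and remove to reach the desired state."""
    current_set, desired_set = set(current), set(desired)
    return {
        "add": sorted(desired_set - current_set),
        "remove": sorted(current_set - desired_set),
    }


def apply_plan(plan, add_member, remove_member):
    """Apply a plan via caller-supplied callables (stand-ins for device API calls)."""
    # Add new members before removing old ones so capacity never dips mid-change.
    for member in plan["add"]:
        add_member(member)
    for member in plan["remove"]:
        remove_member(member)


if __name__ == "__main__":
    live = ["10.0.0.11:8080", "10.0.0.12:8080"]
    wanted = ["10.0.0.12:8080", "10.0.0.13:8080"]
    plan = plan_pool_changes(live, wanted)
    # Here the "API" is just list operations on an in-memory copy of the pool.
    apply_plan(plan, live.append, live.remove)
    print(plan, sorted(live))
```

Because the plan is computed from current versus desired state, running it a second time produces an empty plan, which makes it safe for an orchestration tool to call repeatedly.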