Java has a reputation for an insatiable appetite for resources, requiring strict controls to stay within budget. However, recent JDK updates and other developments have made the Java runtime far more flexible in its memory usage. When configured well, Java can be cost-effective for many types of applications: cloud-native or legacy, microservices or monoliths. Today, it is possible to start Java very slim and scale it up gradually without consuming unnecessary resources.

Here we’ll take a closer look at the factors that influence resource usage inside JVMs, which tools can help, and how processes can be tuned to gain elasticity and scalability, ultimately reducing total cost of ownership (TCO).

Scaling Possibilities: Virtualization vs. Containerization

JVMs can be scaled vertically, horizontally or with a combination of both. To maximize results, start with a vertical scaling configuration that optimizes RAM and CPU usage inside every instance based on current load levels. As a next step, replicate these settings across all instances while scaling horizontally.

VM Scaling

When starting to run a project inside a VM, it is important to pick the right machine size: an instance that is too small can lead to performance issues or even downtime during load spikes. Over-allocating, however, wastes resources that sit unused during normal load or idle periods yet still must be paid for. This can be a tough choice.

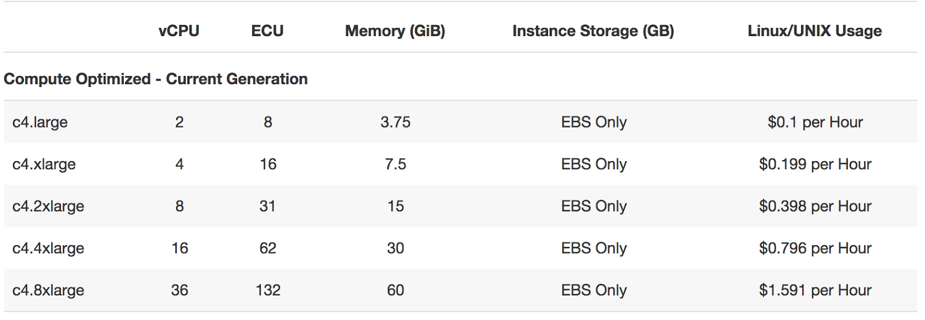

Start with a smaller VM and scale it as the project grows. Even when only slightly more resources are required, the cloud vendor will typically offer to double the size, as this is the most commonly used scaling step (e.g. see AWS offer below).

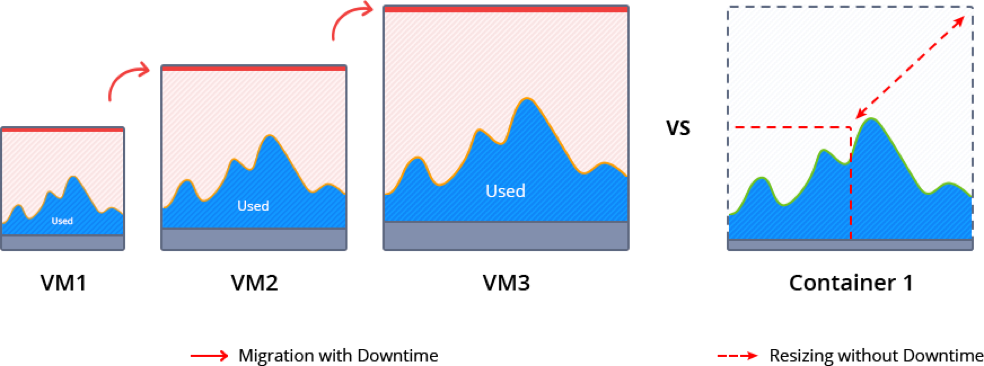

During such scaling, the current VM is normally paused so that the application can be migrated and redeployed. This inevitably causes some downtime and requires manual intervention.

Memory ballooning provides a possible (although rather complicated) way to modify the amount of resources inside VMs on the fly without incurring downtime. However, the process is not fully automated: it requires special tools to monitor memory pressure in the host and guest OS and then trigger vertical scaling as needed. As a result, memory ballooning doesn’t work well in practice, because memory sharing has to be automatic to deliver the expected advantages.

Unfortunately, the lack of elasticity and resource efficiency in VMs often leads to overpaying.

Container Scaling

Containerization presents a new way of packaging applications that makes resource consumption more granular and flexible through automatic resource sharing among containers on the same host using cgroups. Unlike VMs, containers’ resource limits can be easily scaled up and down without the need to pause and reboot running instances.

There are two types of containers: application and system containers. Both use the same scaling approach but they are intended for different purposes. An application container (such as Docker or rkt) typically is more lightweight, runs a single process and is used mainly for creating new projects, as it is easy to build them from pre-existing Docker image templates.

A system container (LXD, OpenVZ) behaves like a full OS and can run full-featured init systems. This type of container is preferable for monolithic and legacy applications, as it can reuse the originally created design and important “old school” settings.

Even system containers are much thinner than virtual machines, so scaling up and down takes much less time. Horizontal scaling is also very granular and smooth, as a container can be easily provisioned from scratch or cloned.

Garbage Collector Choice

To maximize the efficiency of vertical scaling inside a Java-based container, it is important to select an appropriate garbage collector (GC) and tune it according to the project's needs.

Let’s review five widely used garbage collector solutions:

- G1: Enabled by default since JDK 9, this modern shrinking garbage collector can compact free memory space without lengthy pause times and uncommit unused heap. This GC is considered the best production-ready option for vertical scaling.

- Parallel: The default GC in JDK 8, it is a good option for high throughput but not for memory shrinking. This makes it unsuitable for flexible vertical scaling.

- Serial, ConcMarkSweep (CMS): Both of these are shrinking garbage collectors that can scale memory usage in JVMs vertically. Compared to G1, however, they require four Full GC cycles to release all unused resources. A JVM option introduced in JDK 9 (-XX:-ShrinkHeapInSteps) can speed up memory release by bringing down committed RAM right after the first Full GC cycle.

- Shenandoah: Shenandoah is a rising star among garbage collectors that promises to be the best solution for JVM vertical scaling. Its main benefit over the others is the ability to shrink the heap while the application is running, without requiring a Full GC, which helps avoid significant performance degradation.
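Before tuning, it helps to confirm which collector a given JVM actually selected, since the default differs between JDK versions. The following is a minimal sketch using the standard `java.lang.management` API; the class name `ActiveCollectors` is only an illustration, and the collector names reported vary by JDK version and vendor.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper class, not part of any library.
class ActiveCollectors {

    /** Returns the names of the garbage collectors registered in this JVM. */
    static List<String> collectorNames() {
        List<String> names = new ArrayList<>();
        for (GarbageCollectorMXBean bean : ManagementFactory.getGarbageCollectorMXBeans()) {
            names.add(bean.getName());
        }
        return names;
    }

    public static void main(String[] args) {
        // Names containing "G1" indicate G1; names starting with "PS" indicate Parallel.
        // Exact strings depend on the JDK build, so treat them as informational only.
        for (String name : collectorNames()) {
            System.out.println(name);
        }
    }
}
```

Running the class with different start options, for example `-XX:+UseG1GC` or `-XX:+UseParallelGC`, should show the reported names change accordingly.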

Let’s compare memory behavior with shrinking G1 and non-shrinking Parallel:

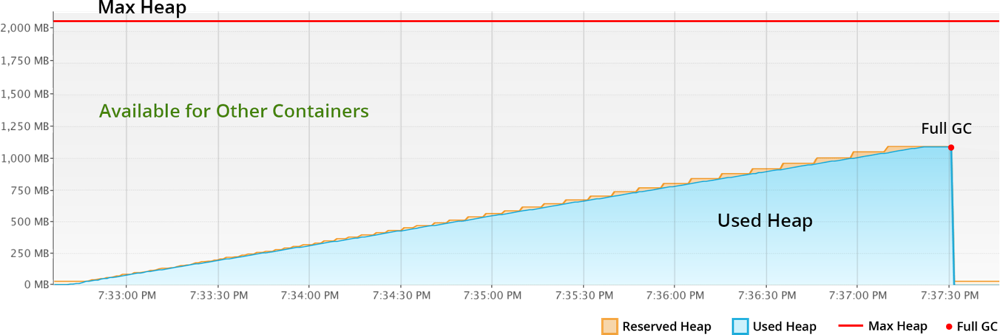

With Parallel, unused RAM is not released back to the OS. The JVM keeps it indefinitely, even after explicit Full GC calls. So even if your application has low RAM consumption, the unused resources cannot be shared with other processes or containers, because they remain fully allocated to the JVM.

The modern shrinking G1 garbage collector significantly improves resource utilization compared to the previous example. The reserved RAM grows slowly, following the real growth in usage. All unused resources within the Max Heap limit are not left standing idle, but are made available to other containers or processes running on the same host.

To summarize, Java applications with a non-shrinking GC or non-optimal JVM start options hold on to all allocated RAM. To scale Java vertically, the garbage collector must be able to shrink memory at runtime. In other words, it has to pack all the live objects together, remove garbage objects, then uncommit unused memory and release it back to the operating system.
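The difference between used and committed heap can be observed from inside the application. The sketch below is an illustration only: the class name `HeapShrinkProbe` and the 64 MiB test load are assumptions, `System.gc()` is merely a hint to the JVM, and whether the committed figure drops afterwards depends on the collector, the JDK version and start options such as `-Xms`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical probe class for illustration; not part of any library.
class HeapShrinkProbe {
    static final long MIB = 1024L * 1024L;

    /** Heap currently committed by the JVM (memory taken from the OS), in MiB. */
    static long committedMiB() {
        return Runtime.getRuntime().totalMemory() / MIB;
    }

    /** Heap currently occupied by objects, live or not yet collected, in MiB. */
    static long usedMiB() {
        Runtime rt = Runtime.getRuntime();
        return (rt.totalMemory() - rt.freeMemory()) / MIB;
    }

    static void report(String phase) {
        System.out.println(phase + ": used=" + usedMiB() + " MiB, committed=" + committedMiB() + " MiB");
    }

    public static void main(String[] args) {
        report("start");

        // Simulate a load spike by holding roughly 64 MiB of byte arrays.
        List<byte[]> load = new ArrayList<>();
        for (int i = 0; i < 64; i++) {
            load.add(new byte[(int) MIB]);
        }
        report("under load");

        // Drop the references so the arrays become garbage, then request a collection.
        load.clear();
        load = null;
        System.gc(); // a request, not a guarantee
        report("after GC");
    }
}
```

With a shrinking collector the committed value after the GC request may fall back toward the used value, while with a non-shrinking one it is expected to stay near its peak. Results from a single run are indicative at best.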

Charging Model of Cloud Providers

Nowadays, most cloud vendors offer a “pay as you go” billing model. This means that it is possible to start with a smaller machine and then add more servers or migrate to an instance twice the size as the project grows. But as described above, you usually reserve more than the application actually consumes to avoid the risk of downtime during load spikes. As a result, many organizations overpay, because the account is charged per VM rather than for the resources consumed inside it.

At the same time, some providers offer a “pay as you use” billing approach, made possible by containerization, resource granularity and automatic vertical scaling. Payment is based on the resource units consumed inside the container, and the required capacity is provided and reclaimed on the fly in accordance with the current load. As a result, you are charged based on actual consumption and are not required to make complex reconfigurations to scale up or down.
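The gap between the two billing models can be illustrated with simple arithmetic. The sketch below is purely hypothetical: the price of 0.01 units per GiB-hour, the 8 GiB reservation and the daily usage profile are made-up numbers, not any provider's actual rates.

```java
// Hypothetical cost comparison; all figures are invented for illustration.
class BillingComparison {

    /** Cost when a fixed-size VM is billed for every hour, regardless of load. */
    static double payPerVm(int reservedGiB, double pricePerGiBHour, int hours) {
        return reservedGiB * hours * pricePerGiBHour;
    }

    /** Cost when each hour is billed by the GiB actually consumed in that hour. */
    static double payPerUse(int[] usedGiBPerHour, double pricePerGiBHour) {
        long gibHours = 0;
        for (int used : usedGiBPerHour) {
            gibHours += used;
        }
        return gibHours * pricePerGiBHour;
    }

    /** Builds a made-up daily profile: 2 GiB for 20 hours, 8 GiB for a 4-hour spike. */
    static int[] sampleDay() {
        int[] usage = new int[24];
        for (int hour = 0; hour < 24; hour++) {
            usage[hour] = (hour >= 12 && hour < 16) ? 8 : 2;
        }
        return usage;
    }

    public static void main(String[] args) {
        double price = 0.01; // hypothetical price per GiB-hour
        System.out.println("Per-VM bill:  " + payPerVm(8, price, 24));
        System.out.println("Per-use bill: " + payPerUse(sampleDay(), price));
    }
}
```

With these invented numbers, the VM sized for the spike is billed for 192 GiB-hours (about 1.92 units), while usage-based billing covers only the 72 GiB-hours actually consumed (about 0.72 units).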

Taking into consideration all these factors and implementing the right settings can significantly reduce the costs for Java hosting resources, as well as positively influence application performance as a whole. Cloud and container technologies continue eliminating barriers and offering new opportunities for projects with different architectures and budgets.

About the Author / Ruslan Synytsky

Ruslan Synytsky is CEO and co-founder of Jelastic. He designed the core technology of the multilingual cloud platform that runs thousands of containers in a wide range of data centers worldwide. Ruslan worked on building highly available clustered solutions, as well as enhancements of automatic vertical scaling and horizontal scaling methods for legacy and microservice applications in the cloud. Ruslan is actively involved in various conferences for developers, hosting providers, integrators and enterprises. Follow him on Twitter.
