It only takes a headline-grabbing mass internet outage or two to drive home the financial and brand incentives of keeping an application up at all times. That’s why in today’s DevOps organizations, incident response is a common topic of conversation.

There’s a lot of talk within the DevOps community about organizational processes and designs for effective incident response such as the Incident Command model, as discussed by our friends from Fastly in this talk. We also frequently discuss technologies and tools for incident response, spanning monitoring, alerting, logging, mitigation and many other focus areas. At NS1, we make use of the Incident Command model and have invested in the usual array of technologies for incident response, along with developing many of our own.

Even after investing significant time considering how to respond to an incident and the budget they allocate for related technologies, it’s still possible for organizations to fail to respond effectively when a real incident arises. As a provider of mission-critical DNS and traffic management services for many of the largest internet properties, we know there’s little room for failure. We’ve learned that augmenting our processes and technologies with three simple practices makes a huge difference in real-world incident response situations.

The Value of a Good Checklist

A good checklist is a favorite tool of mine when it comes to effective incident response. A checklist is a simple, concise, easy-to-understand tool to help an operator recall key steps to take in a particular situation. In an intense incident response scenario, it can be easy for an operator to skip or forget steps in their response. Rather than being overly prescriptive, a checklist’s job is meant to help an experienced professional who is familiar with the systems they’re operating make the best use of their knowledge in a high-pressure situation.

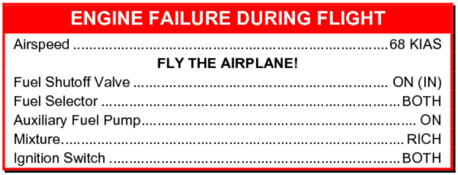

A checklist that I have really come to appreciate doesn’t have anything to do with DevOps or networked systems operations. It is the Cessna 172S Emergency Procedure for engine failure during flight:

In his book, “The Checklist Manifesto,” Atul Gawande discusses this checklist in depth. This example demonstrates some of the key principles behind a good checklist: it’s simple and short, not overly specific or prescriptive; it’s limited to only the most important actions the operator needs to recall during an incident mitigation; and, because of its simplicity and clarity, it helps the operator calmly execute those key actions.

This Cessna checklist offers another great lesson for operators of today’s IT infrastructure. As Gawande writes, “Because pilots sometimes become so desperate trying to restart their engine, so crushed by the cognitive overload of thinking through what could have gone wrong, they forget this most basic task: FLY THE AIRPLANE.” In IT operations, it’s no different—we can sometimes become so distracted by root cause analysis and the desire to know why an incident has occurred that we forget to focus on mitigation first—analysis can come later, once the incident is resolved.

Running Drills

It’s possible to have well-vetted checklists, great tools and technologies and the best organizational models, but they all can be forgotten in an instant of panic if people aren’t used to using them. So, how do we get great at using them all in real-world situations? Through practice.

Any incident response strategy needs to incorporate fire drills because they ensure we regularly exercise our processes, tools and checklists in simulated scenarios that approximate real-world incident response situations. There are a number of ways to create fire drills with respect to networked systems. One tool we often discuss in the technical operations community is one built by Netflix called Chaos Monkey, a software embodiment of what has become an emerging discipline: chaos engineering. It’s a methodology for improving the resilience of a distributed system by creating failure situations—for example, by randomly “breaking” virtual servers in a distributed application to force failure handling systems to engage. This is a kind of “automated” fire drill that drives resilient architecture.

A “war game” that simulates DDoS attack situations is another drill we have benefited from. We build tools similar to those used by sophisticated attackers and regularly exercise our DDoS mitigation response by literally attacking production systems. In this type of fire drill, we split our team into attackers and defenders (similar to the red/blue team approaches used by information security organizations for penetration testing). We’ve learned that running good DDoS war games requires us to attack real systems with real attack techniques, which helps us understand the dynamics of our distributed systems and builds muscle memory in our team around key steps needed to respond to real-world incidents. We’ve also found that fire drills such as these are great tools for team-building; they’re almost like running football or soccer scrimmages at the end of a long practice session, helping everyone on the team understand how they can best contribute and what “position” they should play in a real-world event.

Analyzing After the Fact

Postmortem or retroactive analysis is a final practice for driving effective incident response. After any incident, real or simulated, a thorough review of the incident and the response is critical. Asking and carefully answering some simple questions about the response to the incident is the key: What happened and why? What worked well in our response? How could we have responded more effectively?

The reason to do this type of analysis is to apply learnings from the incident and create a feedback loop, so that in the future, your team’s response to incidents in general—and certainly to similar incidents—is more effective. Obviously, it’s critical to fix any issues that surface during an incident response in your systems, incident response processes and mitigation tools. You should also review relevant checklists after an incident: what was missed, or what was confusing? And finally, feed the outputs of real or simulated incidents into your fire drills so you can practice similar scenarios in the future.

Be Prepared

Like death and taxes, incidents are going to occur. Companies that deliver content or applications in networked systems need to be prepared for them. Best practices such as creating a simple checklist and conducting drills helps a team’s incident responses improve over time, helping to reduce stress during an actual incident and increasing the likelihood of a swift resolution.

About the Author / Kris Beevers

Kris Beevers is founder and CEO of NS1. He is a recognized authority on DNS and global application delivery, and often speaks and writes about building and deploying high performance, at scale, globally distributed internet infrastructure. He holds a PhD in Computer Science from RPI. Prior to founding and leading NS1, he built CDN, cloud, bare metal and other infrastructure products at Voxel, which was acquired by Internap in 2011.

Kris Beevers is founder and CEO of NS1. He is a recognized authority on DNS and global application delivery, and often speaks and writes about building and deploying high performance, at scale, globally distributed internet infrastructure. He holds a PhD in Computer Science from RPI. Prior to founding and leading NS1, he built CDN, cloud, bare metal and other infrastructure products at Voxel, which was acquired by Internap in 2011.