Application performance management (APM) tools have typically been used to measure and monitor the performance of software such as web applications. They track things like request response times and error messages, giving the DevOps team data to analyze so it can make the changes needed to optimize its software. But what about the effectiveness of the data workflows that power so many of today’s applications? How can performance monitoring toolsets be extended to give these workflows opportunities for continuous improvement?

Data Pipelines and Performance Challenges

Below, I will discuss the use of APM tools in the context of DataOps. I will delve into the metrics and insights an APM tool must provide to engineers so they can support data pipelines efficiently, showing how the effective use of data-centric APM tools can provide extensive value to a DataOps team and their organization.

Data Pipelines and DataOps Engineers

A successful DataOps implementation requires creating automated data workflows that analyze large amounts of data quickly, while providing high-quality analysis as an end product. It’s easy to see how the job of a DataOps engineer can be a complicated one. It is their job to build and manage these pipelines to help the business achieve certain goals that rely upon the resulting data analysis. In other words, DataOps engineers are responsible for making sure high-quality data is made available as quickly as possible.

Consider a common scenario with which we are all familiar: online shopping. With each purchase you make from a particular e-commerce website, the system typically provides recommended products for you to buy. This is often the result of a data workflow that produces data analysis for a recommendation system, which is then leveraged by the application to provide the end user with these recommendations. This pipeline is likely to be made up of several processes.

These processes begin with loading large sets of raw data into a distributed file system that stores the data across a cluster. Several more processes then run on this cluster to format and analyze the data, producing the quality data for the recommendation engine that the web application relies on. A lot is being done with large sets of data. And, as is usually the case with so much happening, there is also much that can go wrong.

The Challenges in Managing Data Pipelines

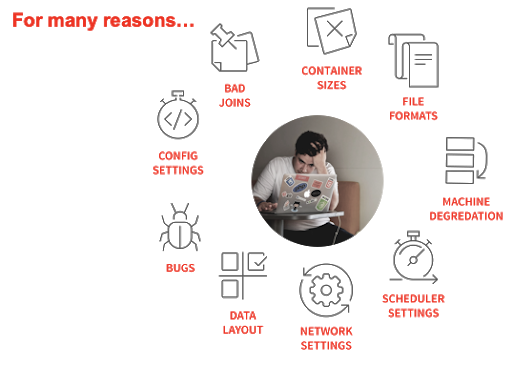

There are several major performance challenges that come with the management of the types of pipelines mentioned above. Below are a few of the common threats to data pipeline performance:

- Scalability: One of the more obvious challenges in building a data pipeline is scalability, meaning how effectively and efficiently the pipeline performs when it has to deal with a much larger dataset. The jobs that transform and analyze the data were likely tested against smaller datasets in development environments. What happens when this is no longer the case? Instead of processing a relatively small amount of data in a test environment, the pipeline now has to process much more in production. Will the processes still perform well enough to deliver quality analysis in a time-efficient manner?

- Application Failures: Data pipelines rely upon parallel processing. But what happens when you experience the failure of one or more of these parallel-running processes that are executing to clean and analyze the data? This can throw a wrench in your ability to relay quality, complete analysis to the business unit. Being able to resolve failures quickly and get the pipeline back up and running plays a huge role in the success of a particular data workflow.

- Fragmentation: Most data pipelines consist of a variety of tools that handle different tasks (such as ingestion, storage, processing, analysis, etc.). With so many different tools in the mix, pipelines can become fragmented. Tool incompatibility issues can arise and data formats can vary. It’s important to be able to unify data within the pipeline, even as it moves between disparate tools.
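To make the application-failure challenge concrete, here is a minimal Python sketch of a parallel cleaning stage that records which partitions failed rather than aborting on the first error. The function names (`clean_partition`, `run_stage`), the partition IDs and the sample rows are all hypothetical, and this is not how any particular APM product works. It only illustrates the kind of per-process failure information a monitoring layer needs to capture.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def clean_partition(partition_id, rows):
    """Hypothetical cleaning step: reject partitions that contain a missing row."""
    if any(row is None for row in rows):
        raise ValueError(f"partition {partition_id} contains a missing row")
    return [row.strip().lower() for row in rows]


def run_stage(partitions, max_workers=4):
    """Run the cleaning step on every partition in parallel.

    Returns (results, failures) so a caller can report or retry the failed
    partitions instead of losing the whole run to the first exception.
    """
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            pool.submit(clean_partition, pid, rows): pid
            for pid, rows in partitions.items()
        }
        for future in as_completed(futures):
            pid = futures[future]
            try:
                results[pid] = future.result()
            except Exception as exc:
                # Keep the error next to the partition that caused it.
                failures[pid] = repr(exc)
    return results, failures


# Illustrative input: partition "p1" contains a missing row and will fail.
partitions = {
    "p0": [" Alice ", "BOB"],
    "p1": ["carol", None],
    "p2": ["Dave "],
}
results, failures = run_stage(partitions)
```

Keeping failures keyed by partition means the healthy partitions still produce output, and the failed ones can be surfaced in an alert or retried on their own.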

APM Tools to the Rescue

Manual vs. Automated

Enabling DataOps within your organization requires continuous feedback and continuous improvement to your data workflows. A good place to implement these strategies is with the use of APM tools to help manage the performance of these pipelines.

Consider how improvement happens without APM tools. The IT and DataOps teams would have to locate performance problems within these pipelines manually and then dig through log files to find the cause. Resolving failures would likely take significantly longer because there is no centralized place to view this information, no automated alerts to notify the team of performance problems and no help in deriving insights from the collected performance data. APM tools can provide an intuitive UI for debugging such issues and the kind of insights needed to detect and resolve these problems quickly.

That being said, monitoring for big data processes is quite different from other types of application monitoring. Certain APM software will be better equipped than others to deal with data pipeline-related challenges. Much of this depends on the ability of the APM tool to integrate with the platforms and frameworks used within the data workflow, as well as on the metrics the performance management software collects and uses to help DataOps engineers refine their processes.

What Types of Metrics Can Help Determine the Causes of Issues Within a Data Pipeline?

Earlier, I discussed data pipeline performance challenges stemming from scalability issues and process failures. Let’s discuss what types of metrics should be collected to resolve issues like these in a data workflow.

Before delving into the metrics, it’s important to note that having full-stack data is essential. By full-stack data, I mean not just infrastructure metrics (which are the most obvious type of metric folks tend to think about when managing data pipelines), but also other data points such as user data, application data and resource data. All this data needs to be unified and correlated across all systems in the pipeline to manage it effectively.
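As a rough illustration of what “unified and correlated” can mean in practice, the Python sketch below merges records from three hypothetical sources (infrastructure, application and user data) into one view per pipeline run. The shared `run_id` key, the field names and the sample values are assumptions made up for this example. A real APM tool would collect and join this data in its own way.

```python
from collections import defaultdict

# Hypothetical records emitted by different layers of the same pipeline.
infrastructure = [
    {"run_id": "r1", "cpu_pct": 91.0},
    {"run_id": "r2", "cpu_pct": 40.0},
]
application = [
    {"run_id": "r1", "job": "format_events", "duration_s": 1200},
    {"run_id": "r2", "job": "format_events", "duration_s": 60},
]
users = [
    {"run_id": "r1", "submitted_by": "analytics-team"},
]


def correlate(*sources):
    """Merge records from every source into one dict per run_id."""
    merged = defaultdict(dict)
    for source in sources:
        for record in source:
            merged[record["run_id"]].update(record)
    return dict(merged)


unified = correlate(infrastructure, application, users)
```

With the records joined on one key, a slow run can be read alongside its CPU usage and the team that submitted it, instead of being pieced together from separate systems.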

Consider scalability, for instance. The APM solution you are using must be able to track several key metrics to help identify scaling-related bottlenecks within the data pipeline. These include:

- The Amount of Data Being Processed: How can we identify a scalability issue without knowing how much data is being processed by a particular run? The answer: We can’t. Being able to quantify the amount of data being handled by each iteration of the data pipeline is the first step in detecting if the workload is the problem.

- The Length of Each Run for Each Individual Process: The APM software an organization uses must be able to integrate with the structure of the data pipeline to report the length of each run for each individual job within the workflow. If a particular job completed in one minute when processing X amount of data but took 20 minutes when processing 10X, the run time grew twice as fast as the data volume. That points to a potential scalability issue and narrows the search for its root cause to a single job in a time-efficient manner. Maybe the fix is as simple as tuning some SQL, and the scalability issue can be rectified in relatively little time.

- Resource Utilization: Certain processes may require more resources than others to complete in a timely fashion. The ability of the APM tool to track the amount of resources being utilized by each process is key in determining if simply reallocating certain resources can fix problems with performance.
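The three metrics above can be combined into a simple scaling check. The following Python sketch is only one possible heuristic, not a description of how any APM product does it. The `RunMetric` fields, the job name, the data sizes and the 0.1 tolerance are all hypothetical. It estimates the exponent k in "duration grows like data volume to the power k" from two runs of the same job. A k close to 1 means roughly linear scaling, and a k well above 1 suggests a bottleneck.

```python
import math
from dataclasses import dataclass


@dataclass
class RunMetric:
    """Hypothetical per-run record: data volume, run length and resource usage."""
    job: str
    input_gb: float
    duration_s: float
    peak_memory_gb: float


def scaling_exponent(small, large):
    """Estimate k in duration ~ input_size**k from two runs of the same job."""
    if small.input_gb <= 0 or large.input_gb <= small.input_gb:
        raise ValueError("the second run must process more data than the first")
    time_ratio = large.duration_s / small.duration_s
    data_ratio = large.input_gb / small.input_gb
    return math.log(time_ratio) / math.log(data_ratio)


def scales_poorly(small, large, tolerance=0.1):
    """Flag a job whose run time grows faster than linearly with its input."""
    return scaling_exponent(small, large) > 1.0 + tolerance


# The scenario from the text: 10x the data, 20x the run time.
baseline = RunMetric("format_events", input_gb=5, duration_s=60, peak_memory_gb=2)
production = RunMetric("format_events", input_gb=50, duration_s=1200, peak_memory_gb=6)

k = scaling_exponent(baseline, production)
flagged = scales_poorly(baseline, production)
```

For the one-minute versus 20-minute scenario, k comes out at roughly 1.3, so the job is flagged. A run that took 10 minutes on 10X the data would give k = 1 and pass the check.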

Unravel is an example of an APM tool that can track all of the above while organizing the data in a user interface that gives DataOps engineers just about all they need to diagnose data workflow performance issues from one screen. In addition, Unravel employs AI to turn the metrics it collects into specific insights on how engineers can optimize their pipeline. This helps decrease the amount of time DataOps personnel spend managing existing processes, allowing them to spend more time creating new processes that improve the quality of analysis provided to the business.

Conclusion

APM tools are no longer solely for ensuring application availability. APM tools for big data, such as the one from Unravel, allow for performance monitoring and analysis within data workflows, helping to ensure the availability of crucial, high-quality data analysis. Any organization looking to improve the quality of its data analysis processes should look to extend its APM toolset to include tools for big data.

This sponsored article was written on behalf of Unravel.