So, your business needs to invest in data analytics technology to improve efficiencies, competitive advantage or business outcomes. There are a ton of things to consider, but underlying each decision are three driving factors:

- Performance – The need to meet service level agreements.

- Growth – The need to accommodate success, as well as deal with inevitable industry changes.

- Budget – The need to do both of the above while keeping costs low enough to not erode the profit gain from analytics.

These three forces underpin nearly every technology selection decision, but what frequently isn’t noticed is how much these three drivers interact.

1. Concurrency and Growth

Test both for current levels of concurrency and the level you hope to achieve in the next few years as the organization grows.

When evaluating benchmarks between vendors, remember that each vendor’s benchmark is designed to make that vendor’s software look good. For example, if a vendor is good at concurrency, you will see various levels of concurrency represented in the benchmark. If the benchmark shows only one workload at a time, or maybe ten, you can safely assume that concurrency is probably not something that technology is good at. Similarly, if the benchmark is done at 10 TB scale, the vendor probably isn’t that good at high-scale analytics.

When bringing vendors in for a proof-of-concept, one mistake I’ve seen (more times than I can count) is testing the capabilities of the software with only one user at a time. People do not line up politely to use analytics one at a time.

Beyond current levels of concurrency, plan for growth: over time, the number of both internal analytics users and external customers will, hopefully, increase. Testing only for the number of users you have now may not uncover problems that reveal themselves when a lot of people hit the system at once. The last thing your organization needs is to turn away customers because they’re overloading your systems.
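As a rough illustration of testing at several concurrency levels rather than one user at a time, here is a minimal Python sketch. Everything in it is an assumption made for illustration: `run_query` is a hypothetical stand-in that just sleeps, and the user counts are arbitrary. In a real proof-of-concept you would replace `run_query` with an actual call to the system under test and use your own current and projected user counts.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_query(query_id: int) -> float:
    """Hypothetical stand-in for one analytics query; returns its latency in seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # placeholder: replace with a real call to the system under test
    return time.perf_counter() - start


def load_test(concurrent_users: int, queries_per_user: int = 3) -> dict:
    """Run queries from many simulated users at once and summarize the results."""
    total = concurrent_users * queries_per_user
    started = time.perf_counter()
    # One worker thread per simulated user, so up to `concurrent_users`
    # queries are in flight at the same time.
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        latencies = sorted(pool.map(run_query, range(total)))
    elapsed = time.perf_counter() - started
    return {
        "users": concurrent_users,
        "queries": total,
        "queries_per_second": total / elapsed,
        "p95_latency_s": latencies[int(0.95 * (total - 1))],
    }


if __name__ == "__main__":
    # Illustrative levels only: today's concurrency and what you hope to reach.
    for users in (1, 10, 50):
        print(load_test(users))
```

Comparing throughput and tail latency across the levels is the point: a system that looks fine with one user may degrade sharply well before it reaches the concurrency you expect in a few years.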

2. Performance and Price

Evaluate price/performance, not performance alone.

Everyone understands the importance of performance. Not one user has ever gone to a data architect or engineer and asked for their analytics to “please execute more slowly.” But performance isn’t simply how fast the analytical software can process queries, how fast the data pipelines can move data or how short the delay is between data input and reaction output. There’s also a monetary aspect of performance to bear in mind.

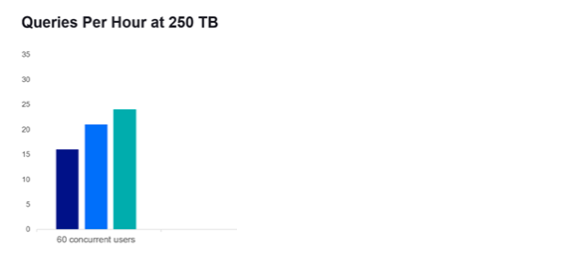

In a recent benchmark, three data management and analytics stacks were compared with 60 simulated concurrent users/workloads. The difference in throughput on 250 TB of data was minor: roughly 16 queries per hour for the slowest stack and 23 queries per hour for the fastest.

(McKnight Benchmark – SaaS Data Analytics Platform Comparison – vendor names removed)

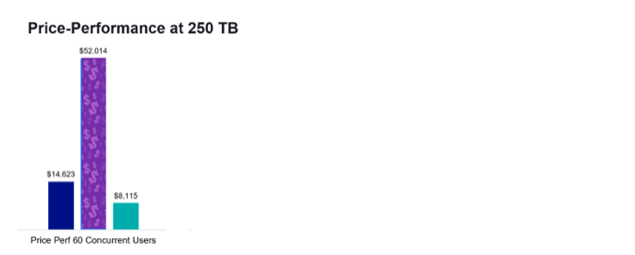

It looks like performance is just not that big a consideration for this technology. But there is no such thing as an unlimited budget. Let’s look at how much it would cost to get this level of performance out of each stack.

(McKnight Benchmark – SaaS Data Analytics Platform Comparison – vendor names removed)

Price-performance is a far more useful metric of value to the organization. How much the software can do is irrelevant if the budget limits how much the business can afford to do with it.
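To make the metric concrete, price-performance can be expressed as cost per query: hourly cost divided by queries per hour. The sketch below uses the 16 and 23 queries-per-hour figures mentioned above, but the stack names and hourly costs are hypothetical values chosen purely for illustration; they are not taken from the benchmark.

```python
from dataclasses import dataclass


@dataclass
class Stack:
    name: str
    queries_per_hour: float
    cost_per_hour_usd: float  # hypothetical figure, NOT from the benchmark

    @property
    def cost_per_query_usd(self) -> float:
        """Price-performance: what each completed query costs."""
        return self.cost_per_hour_usd / self.queries_per_hour


stacks = [
    Stack("Stack A", queries_per_hour=16, cost_per_hour_usd=400.0),
    Stack("Stack B", queries_per_hour=23, cost_per_hour_usd=1150.0),
]

# Rank by cost per query, cheapest first, rather than by raw throughput.
for s in sorted(stacks, key=lambda s: s.cost_per_query_usd):
    print(f"{s.name}: {s.queries_per_hour:.0f} queries/h, "
          f"${s.cost_per_query_usd:.2f} per query")
```

With these made-up costs, the stack with the lower throughput is the cheaper one per query. Whether that holds for any real stack depends entirely on its actual pricing, which is why the comparison has to be made with real numbers.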

3. Software Efficiency

Consider how much efficiency and control you’re willing to trade for ease-of-use.

When you analyze data in the cloud, you’re not just paying for software, you’re also paying for hardware, a.k.a. cloud compute instances. Bundling the two costs in one bill makes things easier. But it also means that the provider makes more money the more instances, or compute nodes, you use. You trade ease-of-use in billing for a higher price, and the provider no longer has a financial incentive to make its software efficient.

Similarly, for cloud-based analytics software to scale up in performance and concurrency with zero effort on your part, it has to use only defaults for all settings internally. It can scale only by throwing more compute at the problem, compute that you’re paying for every time you use it. You’re trading control of the software (tuning, scaling guidelines, shutdown conditions, etc.) for ease-of-use, again at a higher price.

Throwing more compute at a problem isn’t always the best solution. Recently, I saw a POC where the customer implementation included 278 EC2 nodes on Amazon cloud. The organization didn’t initiate the POC because of inefficiency. They were having performance problems, even with that much compute power. A competing product was able to run on only nine EC2 nodes with the same workload and better performance. The company was paying 25 times as much for the compute hardware because it was convenient.

Be aware of the tradeoffs.

4. Affordable Scalability

Paying for only what you use can become impractical when you use more and more.

The volume of data being created, consumed and captured is growing exponentially. By 2025, it is estimated that nearly 30% of the data being generated will be real-time. Instead of arriving in batches, data will be pulled in continuously. Queries will be answered in seconds, not hours, or in milliseconds, not seconds.

Going to the cloud can make tremendous sense when a company or a workload in that company is new. But studies show that as businesses scale and growth slows, the pressure the cloud puts on business margins eventually outweighs its benefits. This is why most enterprises today are considering repatriating some or all of their workloads off the cloud.

5. Flexibility for Change

Make certain your stack will continue to work even as conditions change, and you won’t have to rebuild from scratch to do the same thing in a different location.

When you’re building a long-term architectural strategy, you want to ensure you cover as many bases as possible because, the fact is, you really don’t know what the future holds. Ten years ago, maybe you had a Hadoop on-premises strategy or a data warehouse appliance. Today, you may have a cloud-focused strategy. Tomorrow, maybe you’ll move some or all of those workloads back on-premises due to performance, security, costs, regulations or other reasons.

This is why you want flexibility: an architecture that doesn’t mandate “cloud-only,” “on-premises-only” or “this-cloud-only” deployment and that doesn’t integrate only with software from the same vendor. You want an architecture that works equally well in the cloud, on-premises, hybrid or multi-cloud, giving you the flexibility to go wherever business needs are best met.

Containerization offers possibly the highest level of flexibility in deployment, but it can also add to deployment complexity, so again, be aware of what you’re trading. If the software you choose is platform-agnostic (not tied to any particular location, ecosystem or deployment model), your future choices will be a lot more flexible.

Regardless of what analytics you choose, what you decide to build or what use cases you have to meet, striving for balance in performance, growth and budget will create a strong data architecture, now and for the future:

- The analytics architecture is high-performance: it should meet SLAs, get things done on time and meet or exceed expectations and requirements.

- The analytics architecture is resilient to growth and change; even if there is rapid growth or sudden or extreme changes, it’s future-ready.

- The analytics architecture should control costs and live within an allocated budget.

Keep these guidelines in mind when choosing an analytics provider. The decisions you make today will have direct ramifications on the growth, profitability and success of the business tomorrow.