Agile makes development deliver code faster. CI makes testing move faster and CD makes delivery faster, but what keeps track of the volume of change all of this introduces and delivers optimizations faster?

That is the question Opsani wants you to be asking. And it’s a valid question. Optimization in a complex and constantly changing software world becomes more important–if it can keep up. Opsani thinks it can, with a little AIOps to help anyway.

The premise is simple. There are ways to adjust the environment that might increase performance and/or reduce costs. Those ways are individually relatively simple (such as JVM settings or cloud instance sizing), but combined they make a complex web that impacts performance as a whole, with individual bits sometimes helping and sometimes hurting.

Up the JVM memory on a large instance and it might help. Switch to a smaller instance later, and that same JVM change may cause problems on the smaller footprint. So something more dynamic is needed. As code changes, the best optimization options might change too. As other parts of the environment change, the concern grows that applications are not running at their best and might be costing too much money.
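To make the JVM example concrete, here is a minimal sketch of how one setting can be fine on one instance size and a problem on another. The `heap_fits` helper and the 25% headroom figure are my own illustrative assumptions, not anything from Opsani or a JVM tuning guide.

```python
def heap_fits(heap_mb: int, instance_mb: int, headroom: float = 0.25) -> bool:
    """Return True if the heap leaves at least `headroom` of instance RAM free.

    The 25% default is an illustrative assumption, not a recommendation.
    """
    return heap_mb <= instance_mb * (1 - headroom)


# A 12 GiB heap on a 32 GiB instance leaves plenty of room...
print(heap_fits(12288, 32768))
# ...but the same heap setting no longer fits after a move to 8 GiB.
print(heap_fits(12288, 8192))
```

The setting itself never changed between the two calls; only the environment around it did, which is the whole problem.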

Opsani’s answer to this problem is continuous optimization: using AIOps to check different combinations of settings at a rate that operations staff couldn’t manage even if they did have the time. And they generally don’t.

From my (not their) perspective, there are two pieces to this puzzle. First is gathering inputs and determining performance; second is tweaking settings. Then repeat. It seems simple, but the environment continuous optimization would work in is increasingly complex. Look at how many places optimization can occur in a given environment (VM/instance, code, network, management platform, storage/database choices) and it is clear there is a lot going on, and all of these settings can impact each other.
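The measure, tweak, repeat loop can be sketched in a few lines. This is a toy brute-force search under stated assumptions, not Opsani’s actual method: the `SETTINGS` values, the `toy_measure` model, and the performance-per-dollar score are all invented for illustration, and a real system would measure a live application rather than call a formula.

```python
import itertools

# Hypothetical tunables; real ones would come from your own environment.
SETTINGS = {
    "heap_mb": [2048, 4096, 8192],
    "instance_mb": [8192, 16384],
    "replicas": [2, 3, 4],
}


def toy_measure(config):
    """Stand-in for real monitoring. Returns (performance, monthly_cost).

    Invented model: a heap above 75% of instance memory hurts performance,
    and cost grows with instance size and replica count.
    """
    heap, inst, reps = config["heap_mb"], config["instance_mb"], config["replicas"]
    usable = heap if heap <= 0.75 * inst else heap * 0.25
    performance = reps * usable
    monthly_cost = reps * (inst / 1024) * 10.0  # made-up price per GiB
    return performance, monthly_cost


def optimize(settings, measure_fn):
    """Try every combination and keep the best performance-per-dollar."""
    names = list(settings)
    best_config, best_score = None, float("-inf")
    for values in itertools.product(*(settings[n] for n in names)):
        config = dict(zip(names, values))       # tweak settings
        performance, cost = measure_fn(config)  # gather inputs
        score = performance / cost
        if score > best_score:                  # ties keep the first one seen
            best_config, best_score = config, score
    return best_config, best_score


best, score = optimize(SETTINGS, toy_measure)
print(best, round(score, 2))
```

Even this tiny example has 18 combinations to test from just three settings. Add a few more tunables and the count multiplies quickly, which is why doing this by hand does not scale.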

Hence the idea of continuous optimization appeals to me. They’ve got the interfaces one would expect for gathering inputs, with names such as Jenkins, Datadog and New Relic. They’ve got the interfaces to enact and test out changes too, with integrations for products such as Kubernetes, Terraform, Rancher and Wavefront. So it certainly appears they’ve got the basics covered. Of course there are vendor interfaces I’d like to see that aren’t in the list of integrations, and no doubt the same is true for you. But the core is here, reaching the monitoring and management tools most companies use regularly.

Opsani also seems to be aware of the different comfort levels of various organizations: it can run in dev or test as well as production. Since every organization has a different taste for putting new ideas into production, this is a good idea, though personally I would aim for eventual production rollout, just because that is where the rubber meets the road. Having it running as part of normal operations means even that small change that reduces responsiveness of your application by 25% (we’ve all seen it) will be detected, and Opsani can try changing some of the environment to compensate.

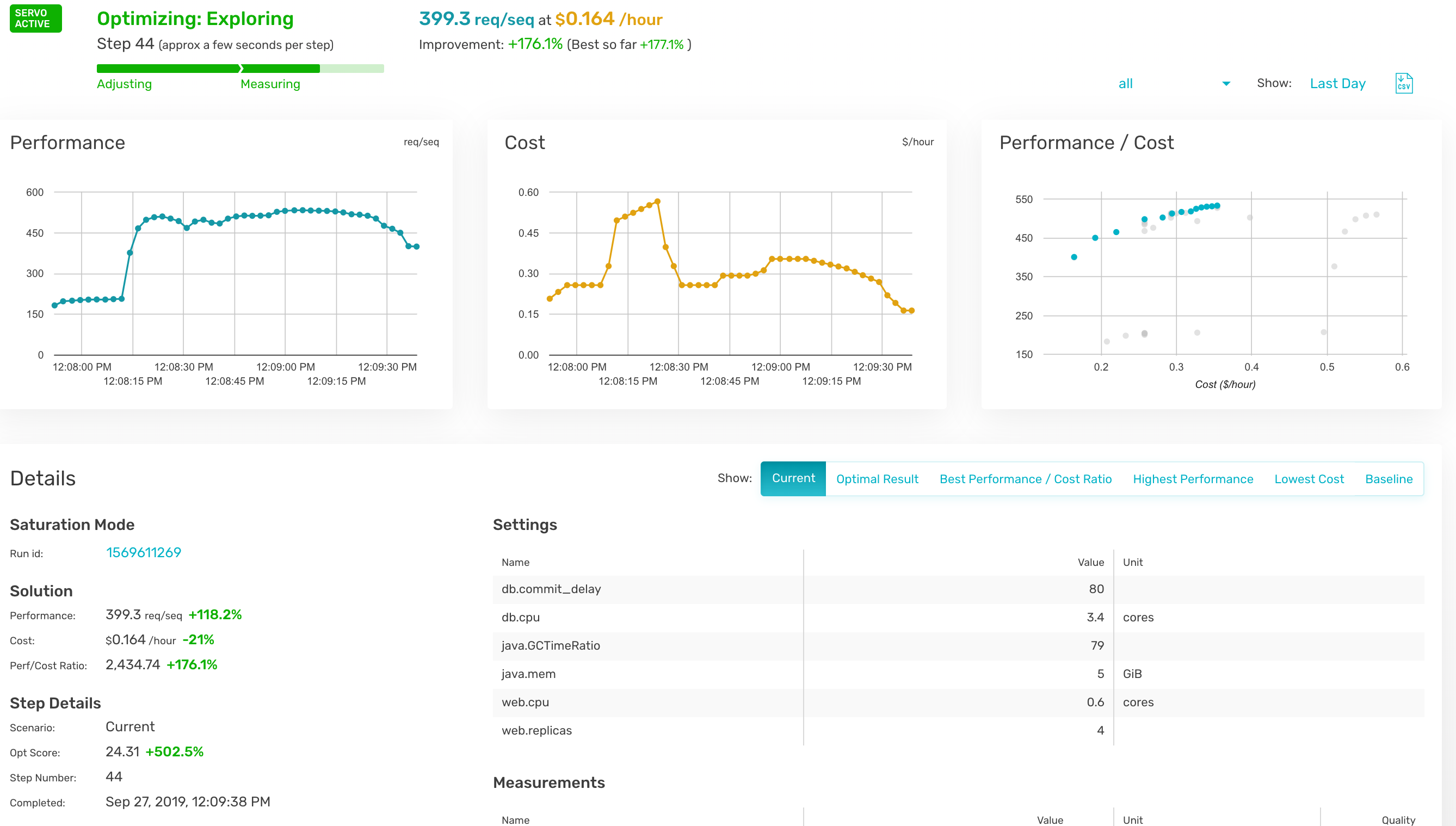

When I spoke with them, we did not discuss cost, but we did discuss savings. For budget-sensitive environments, it is worth weighing what the tool would cost your organization against the potential savings in actual monthly dollars. The screenshot above shows the cost measure combined with the performance measure. Reducing monthly overhead to pay for a tool that also improves performance might just be a no-brainer, if it suits your environment.

I can’t say if it suits your environment, nor can any other analyst/pundit. That’s for your organization to decide. But the idea of continuous optimization is a good one. Opsani is not alone in working on this problem, so even if this particular tool doesn’t suit your needs, you can look for others that do. I recommend getting the demo and seeing what they’ve got, and how it might help in your environment.