New Relic and Mona, a provider of a platform for monitoring artificial intelligence (AI) models, today announced an alliance to help bridge the divide between DevOps teams and data scientists.

Most data science teams today build models based on machine learning algorithms, using platforms that manage the life cycle of those models, a practice otherwise known as MLOps. Mona provides a set of monitoring tools that detect the drift that can occur when some of the underlying assumptions made while building a model prove to be less accurate over time or when new data sources become available.
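To make the idea of drift concrete, here is a minimal sketch of one widely used drift statistic, the population stability index (PSI), which compares the distribution of a feature at training time against what the model sees in production. This is an illustration only and not a description of how Mona detects drift; the function name, the bin count and the smoothing value `eps` are all assumptions chosen for the example.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,      # assumed bin count for this sketch
    eps: float = 1e-6,   # assumed floor to avoid log(0) for empty bins
) -> float:
    """Compare two samples of one numeric feature; 0.0 means identical histograms."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline must contain more than one distinct value")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> list:
        # Histogram over the baseline's range; out-of-range values go to the edge bins.
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    # PSI = sum over bins of (q - p) * ln(q / p)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

A commonly cited rule of thumb treats a PSI above roughly 0.2 as a sign of meaningful shift, though the right threshold depends on the feature and the model.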

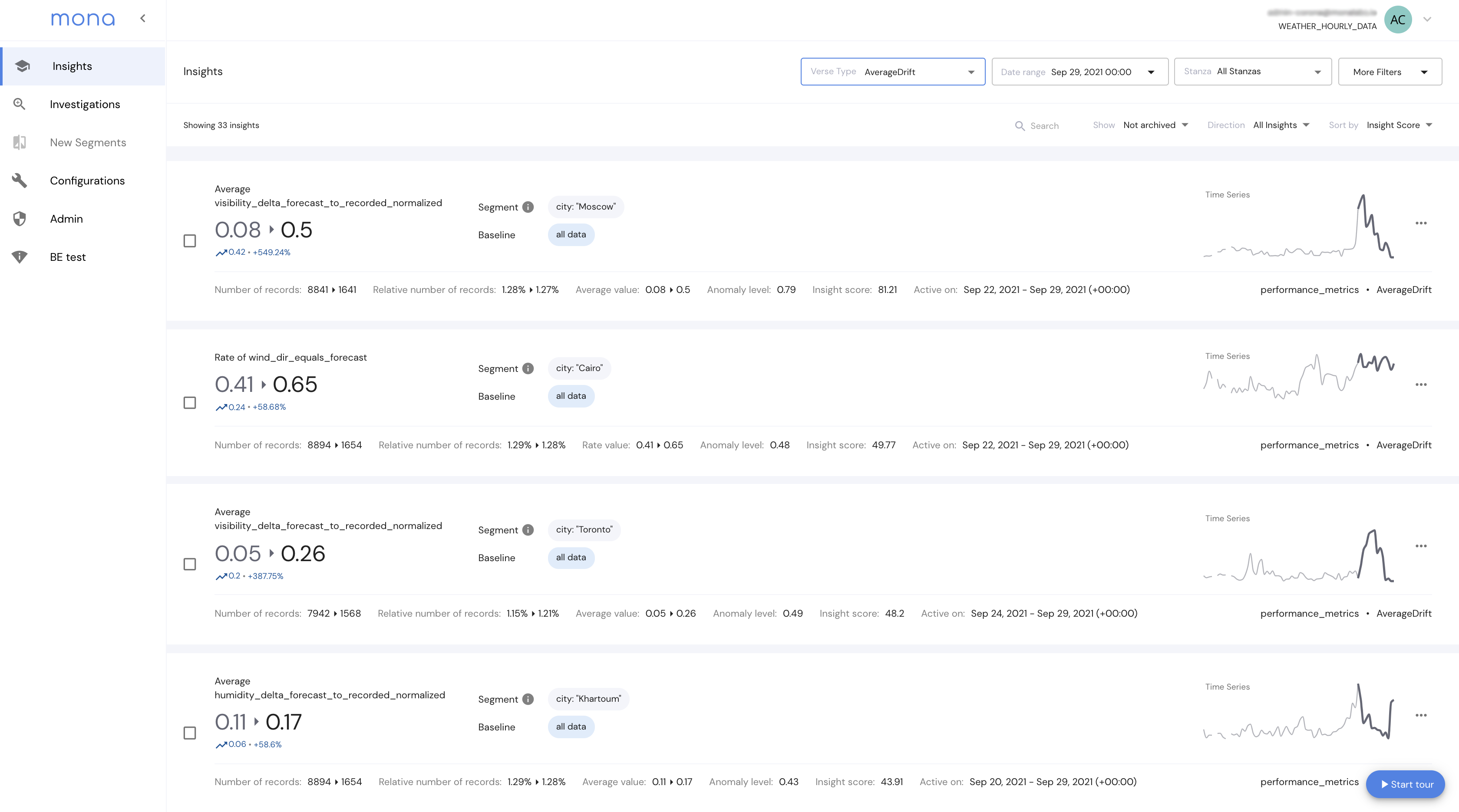

New Relic, meanwhile, provides an observability platform that is widely employed by DevOps teams to monitor IT environments. IT teams that have access to New Relic One can now also view dashboards provided by Mona that surface anomalies involving data integrity and model performance.

The integration also enables users to create custom Mona alerts that are shared via the New Relic Alerts and Applied Intelligence service. That capability reduces the volume of alerts generated by correlating detected anomalies to specific IT events.
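The reason correlation cuts alert volume can be sketched in a few lines: anomalies that occur shortly after a known IT event are grouped under that event rather than raised one by one. The sketch below is hypothetical and does not use the New Relic or Mona APIs; the `Event` and `Anomaly` types, the `correlate` function and the five-minute window are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Event:
    name: str          # e.g. a deployment or configuration change (hypothetical)
    timestamp: float   # seconds since some epoch


@dataclass
class Anomaly:
    description: str
    timestamp: float


def correlate(
    anomalies: List[Anomaly],
    events: List[Event],
    window: float = 300.0,  # assumed: anomalies within 5 minutes after an event
) -> Tuple[Dict[str, List[Anomaly]], List[Anomaly]]:
    """Group each anomaly under the most recent event that precedes it within the window."""
    grouped: Dict[str, List[Anomaly]] = {}
    unmatched: List[Anomaly] = []
    for anomaly in anomalies:
        nearest = None
        for event in events:
            delta = anomaly.timestamp - event.timestamp
            if 0 <= delta <= window and (
                nearest is None or event.timestamp > nearest.timestamp
            ):
                nearest = event
        if nearest is None:
            unmatched.append(anomaly)
        else:
            grouped.setdefault(nearest.name, []).append(anomaly)
    return grouped, unmatched
```

With this grouping, each key in `grouped` would become a single alert, so several anomalies tied to one deployment produce one notification instead of many, while anomalies with no nearby event are still reported individually.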

Yotam Oren, CEO of Mona, said that level of integration provides a bridge between DevOps and data science teams, which typically have widely divergent cultures.

Incorporating AI models within applications is the next major DevOps challenge. There is almost no application being built today that won’t incorporate some level of AI. The challenge is that AI models are built by data scientists who employ a wide range of machine learning operations (MLOps) platforms to construct those models. The pace at which those AI models are developed, deployed and updated varies widely. However, all organizations will eventually need to confront the problem of how to insert AI models into applications both before and after those applications are deployed in a production environment.

There are two schools of thought about how best to achieve that goal. The first is to assume an AI model is just another type of software artifact that can be managed as part of an existing DevOps process. In that context, there may be no need for a separate MLOps platform. Conversely, proponents of MLOps contend that AI models are built using pipelines created by data engineering teams working in collaboration with data scientists. Developers only become part of the process when an AI model needs to be inserted into an application environment. AI models may ultimately need to be incorporated within an application, but the proponents of MLOps platforms maintain that building models requires a unique platform to manage a software artifact that is built by training algorithms.

It’s only a matter of time before compliance issues force some form of convergence between DevOps and MLOps teams, noted Oren. The longer it takes to address the cultural and technical issues that must be resolved to meld DevOps and data science teams, the more challenging it will become as both teams grow more set in their respective ways.