NGINX this week announced it has developed a common framework for managing application delivery that can be applied across both monolithic and microservices-based applications.

Announced at NGINX Conf 2018, the NGINX Application Platform has been rearchitected to provide traditional load balancing capabilities and a web application firewall (WAF), as well as a built-in service mesh for managing microservices and an integrated application programming interface (API) management platform.

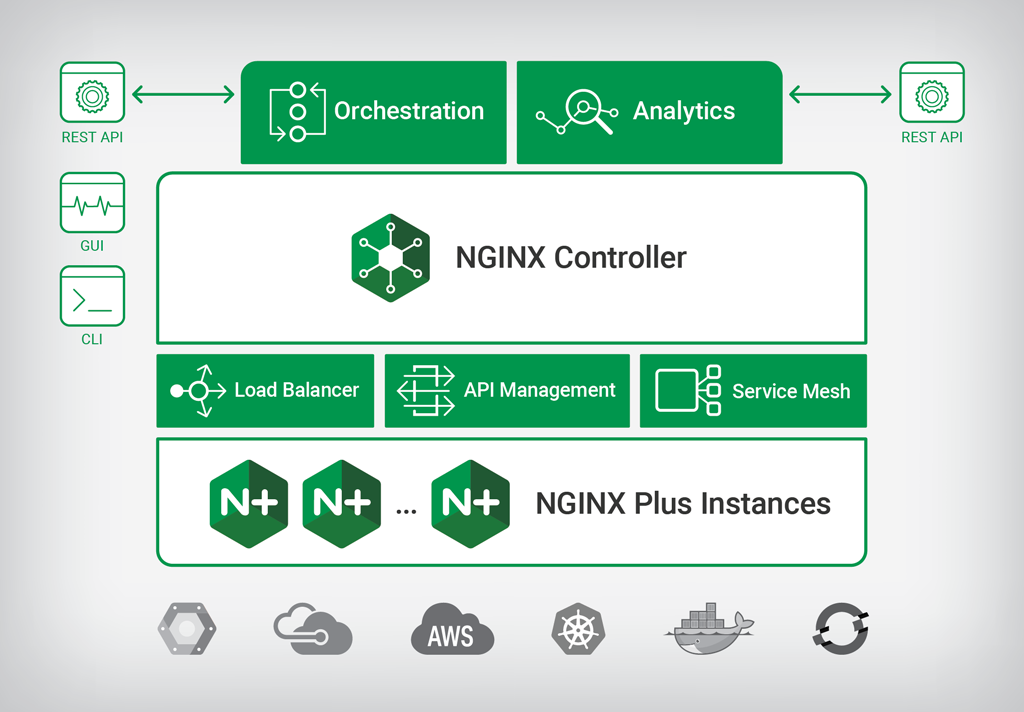

Sidney Rabsatt, vice president of product management at NGINX, said an update to NGINX Controller is at the heart of a much more comprehensive approach to integrating load balancing, service mesh technologies and API management. Due out later this year, NGINX Controller 2.0 is based on a modular architecture that enables additional functions to be added via plug-in modules. The module for API management is slated for release in the fourth quarter, while the service mesh module is scheduled to become available in the first half of 2019.

Rabsatt said it’s become clear IT organizations don’t want to replicate existing application delivery controllers just to manage microservices. There will never be such a thing as an enterprise IT environment that is made up entirely of microservices. The latest release of NGINX Controller makes it possible to manage application delivery across the broad spectrum of applications that need to be addressed within modern IT environments, he said, noting that, in effect, it’s a one-size-fits-all approach to managing application delivery via a single fabric.

As part of that effort, NGINX this week also revealed that NGINX Plus R16, an enterprise-grade version of the core NGINX load balancing software, has been updated to apply load balancing algorithms to microservices running on Kubernetes clusters and to add support for Amazon Web Services (AWS) PrivateLink.

Finally, NGINX announced version 1.4 of Unit, an open source web and application server that supports TLS encryption, dynamic reconfiguration via an API and experimental support for JavaScript with Node.js, extending existing support for the Go, Perl, PHP, Python and Ruby languages. Full support for JavaScript and Java is planned for 2019.

In general, Rabsatt said it’s clear organizations that have embraced DevOps are the most advanced when it comes to the need to unify management of application delivery. In fact, much of the success NGINX has enjoyed of late starts with developers downloading an open source instance of NGINX to embed within their applications. As those instances of NGINX start to proliferate across the enterprise, more IT operations teams become exposed to the rest of the NGINX platform.

It may take a while for microservices-based applications to achieve enough critical mass to force organizations to rethink their approach to application delivery. But it’s now only a matter of time before organizations need to decide the degree to which they want to deploy standalone application delivery controllers and service meshes for microservices applications instead of pursuing a more hybrid approach. NGINX is clearly betting on the latter, given the number of legacy monolithic applications that will be running for years.