Dev Manager Vadim Madison published a chronicle of his team’s move from a monolithic application to a microservices model. In the process, the tools and methodologies they used for continuous integration/continuous delivery (CI/CD) had to be expanded and even changed out in several stages. It’s a long read, but worth the time, as not many people are documenting a full-on conversion.

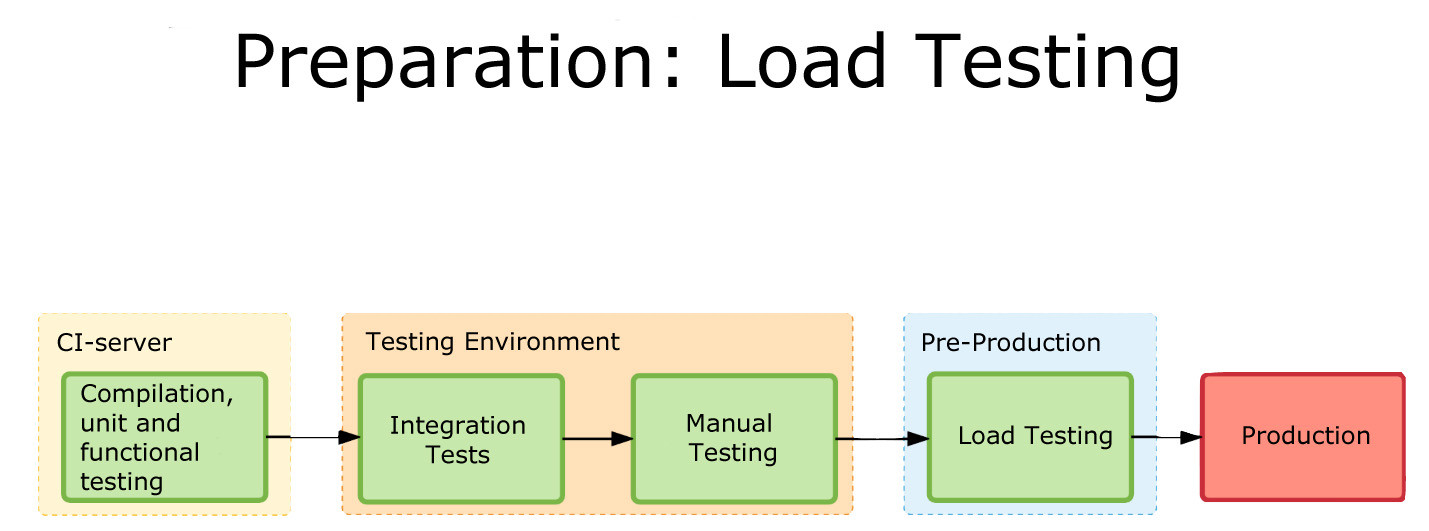

One of the more interesting pieces of his work is the software infrastructure they have to build up to cover CI/CD/monitoring of the application. This is a lot more complex than the original application was, and it all must be managed. One of the benefits of DevOps is to make management of these tools easier, and he does talk a bit about scripting it out and implementing APIs to take problem instances out of the pool and determine what went wrong so they can go through the Dev/CI/CD cycle again.
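To make the "take problem instances out of the pool" idea concrete, here is a minimal sketch in Python. It is not Madison's implementation: the `InstancePool` class, the `sweep` method, the instance names and the `fake_check` probe are all hypothetical, and a real setup would call a load balancer or orchestrator API instead of editing an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class InstancePool:
    """Hypothetical in-memory stand-in for a load-balancer pool."""
    active: List[str] = field(default_factory=list)
    # Maps a removed instance to the reason it was pulled, for later diagnosis.
    quarantined: Dict[str, str] = field(default_factory=dict)

    def sweep(self, check: Callable[[str], str]) -> List[str]:
        """Run `check` on every active instance.

        `check` returns an empty string for a healthy instance, or a
        short reason string for an unhealthy one. Unhealthy instances
        are moved from `active` to `quarantined`.
        """
        removed = []
        for instance in list(self.active):  # copy: we mutate while iterating
            reason = check(instance)
            if reason:
                self.active.remove(instance)
                self.quarantined[instance] = reason
                removed.append(instance)
        return removed


def fake_check(instance: str) -> str:
    # Assumed probe; a real one might hit a health endpoint or query metrics.
    return "error rate above threshold" if instance == "svc-b" else ""


pool = InstancePool(active=["svc-a", "svc-b", "svc-c"])
removed = pool.sweep(fake_check)
```

Keeping the reason alongside each quarantined instance is the part that supports the "determine what went wrong" step: the instance stops receiving traffic, but the evidence stays available for whoever sends the fix back through the Dev/CI/CD cycle.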

What makes it interesting is where the team found limitations and switched tools midstream. Because this is an ongoing process, Madison alluded at the end to the fact that they have started eliminating TeamCity (or at least limiting what they’re using it for). I think that when most people begin high-rate DevOps, or even constantly monitored low-rate DevOps, some of the problems his team ran into escape their notice until they hit an issue. The architectural growth will occur: you are automating more, building more, monitoring more and releasing more. The question is, how far ahead do you look? Until recently, the answer was pretty much, “Not far,” because we didn’t have cases like Madison’s to draw on and compare with what we were doing. But that is starting to change.

CI/CD/ARA vendors can help, of course. Collectively they have a lot of knowledge about the lay of the land, and how people are using the tools to tackle the needs of new architectures. But more public documentation such as Madison’s would be great, too.

Even though you’re moving at a much faster pace, looking ahead and adding steps in the CI/CD/ARA space can provide an added bit of resiliency, and monitoring at a level appropriate to your project’s maturity can add that extra bit of “we know, we can recover.” There have been some spectacular failures that monitoring did indicate, but the volume of data flowing through monitoring was enough to mask the warning signs. Today, the best solution is to monitor at the appropriate level and then feed that monitoring back into the system.

But you’ll be supporting more software infrastructure. The growth of tools is pretty inevitable: As you spread the functionality across machines/instances/containers, you will need to stretch CI/CD/ARA tools to multiple interrelated targets and monitor the overall system and its performance bottlenecks. And for now, the tools chosen will not always be widely known, so a process to bring new Dev/DevOps team members quickly up to speed on the more obscure tools should be on your list of to-dos.