The use of APIs and microservices is increasing: a recent study from F5 estimated that the industry is approaching 200 million total APIs. As organizations expand their use of APIs and microservices, they inevitably require some form of service management architecture. There are two major design options for implementing this: an API management system or a service mesh.

The key distinction between API management and service mesh can be framed using the four cardinal directions. API management is typically adopted for REST services that face external developers, which is referred to as north-south traffic. Service mesh, on the other hand, is more commonly used by internal suites of microservices to help control lateral, or east-west, communication. There are more nuances to each, as we’ll cover below, but this is essentially how they’re adopted in practice.

However, this binary is becoming blurred as collections of enterprise digital applications expand and access to cross-company business domains begins to look and feel more like consuming a third-party SaaS. Furthermore, some suggest that with the right controls, service mesh can cater to external users in the same way API management does. What’s more, service mesh and API management are complementary—they can coexist within the same organization and even be deployed in the same projects.

I recently chatted with Mark Cheshire, director of product at Red Hat, about the emergence of both API management and service mesh. According to Cheshire, both approaches accomplished similar goals but evolved very differently. Below, we’ll revisit why these two paradigms emerged and compare and contrast them. In a separate article, we’ll consider when it’s best to integrate them both.

What is API Management?

API management is most often associated with north-south traffic. By exposing your API to the outside world, you enable other developers to connect it to their systems and leverage your data and functionality like building blocks.

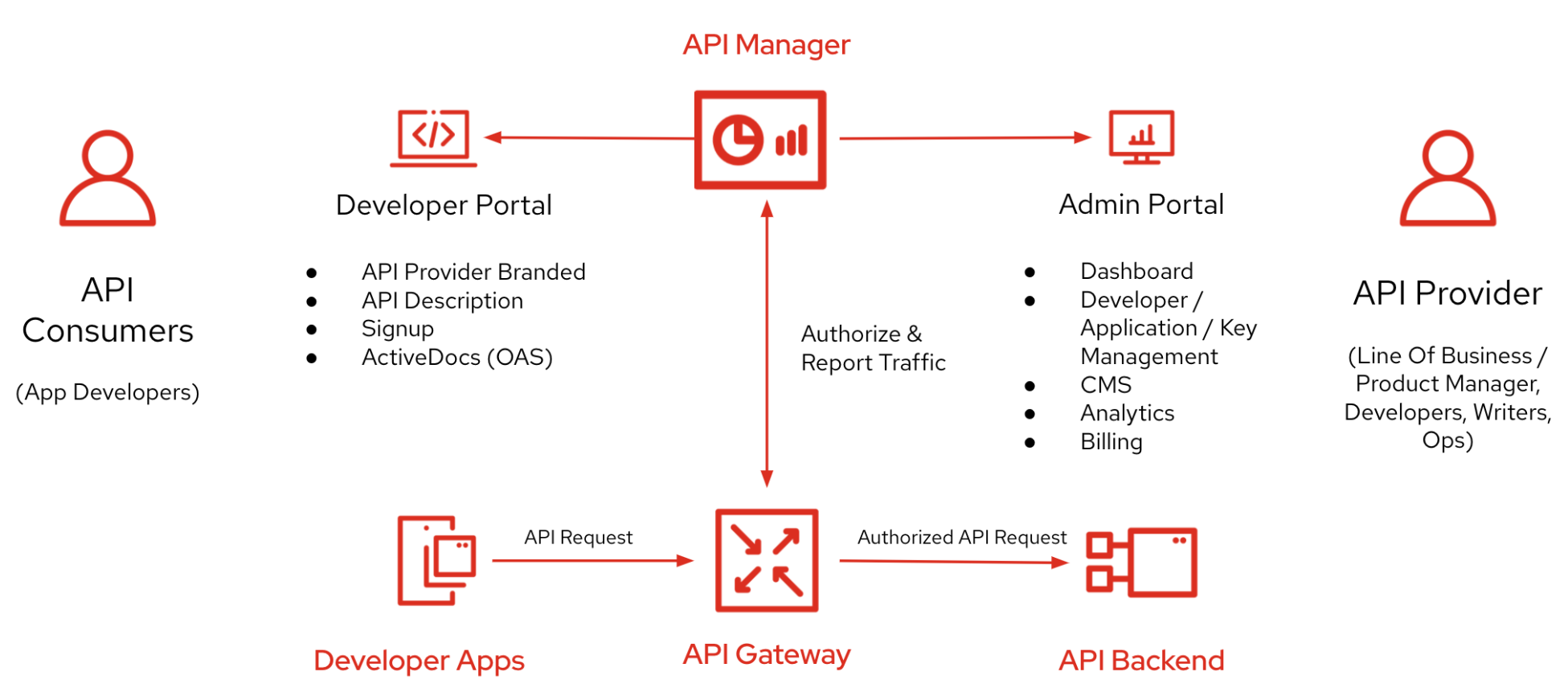

API management arose in response to the rise of web APIs. It is a broad concept encompassing many functions required to expose an API endpoint to the outside world. This can include authentication, authorization, request routing, documentation, rate limiting, monitoring, logging, monetization and life cycle management. A key goal is to make integration easier for the developer consumer. Architecturally, API management follows a gateway pattern: consumers issue requests to the gateway, which then routes them to the underlying services.
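The gateway pattern described above can be sketched in a few lines of Python. This is only an illustrative toy, not how any particular API management product works: the `Gateway` class, the API key, the consumer name and the two backing services are all hypothetical names chosen for the example. It shows just two of the listed functions, authentication and request routing, happening at a single entry point.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

Response = Tuple[int, str]           # (HTTP-like status code, body)
Handler = Callable[[str], Response]  # a backing service, reduced to a function


@dataclass
class Gateway:
    """Toy API gateway: authenticate the caller, then route by path prefix."""
    api_keys: Dict[str, str]  # API key -> consumer name (hypothetical data)
    routes: Dict[str, Handler] = field(default_factory=dict)

    def register(self, prefix: str, handler: Handler) -> None:
        self.routes[prefix] = handler

    def handle(self, api_key: str, path: str) -> Response:
        # Authentication happens once, at the edge, before any service is reached.
        if api_key not in self.api_keys:
            return 401, "unknown API key"
        # Try longer prefixes first so a more specific route wins.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path.startswith(prefix):
                return self.routes[prefix](path)
        return 404, "no route"


def orders_service(path: str) -> Response:
    return 200, f"orders handled {path}"


def billing_service(path: str) -> Response:
    return 200, f"billing handled {path}"


gateway = Gateway(api_keys={"key-123": "partner-a"})
gateway.register("/orders", orders_service)
gateway.register("/billing", billing_service)

print(gateway.handle("key-123", "/orders/42"))
print(gateway.handle("wrong-key", "/orders/42"))
```

The consumer only ever sees the gateway; which service sits behind `/orders` is an internal detail that can change without breaking the external contract.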

For APIs, the big catalyst was the emergence of Web 2.0, Cheshire explained. Numerous startups began consuming RESTful APIs to connect their web properties and partner ecosystems. Companies also found REST APIs to be a much more straightforward integration approach than SOAP and SOA. They also saw the ease-of-use benefits and adopted API-first designs within their internal infrastructure.

As of 2021, over 70% of APIs are built for internal use. So, do all these services need API management? It depends. “If you communicate externally, you need API management,” explained Cheshire. But it’s a grey area for services within an enterprise, he said, though inter-domain traffic could often benefit from API management.

Strengths

- Can enable north-south traffic

- Protects the boundary of an application

- Designed to ease external developer consumption

- Potential for monetization, subscription plans

- Typically plug-and-play; easier to support

- Many solutions on the market

- Developer experience and docs

- Analytics and reporting

Weaknesses

- May be heavier than service mesh

- Fewer open source options; vendor lock-in

- Analysis paralysis in comparing solutions

What is Service Mesh?

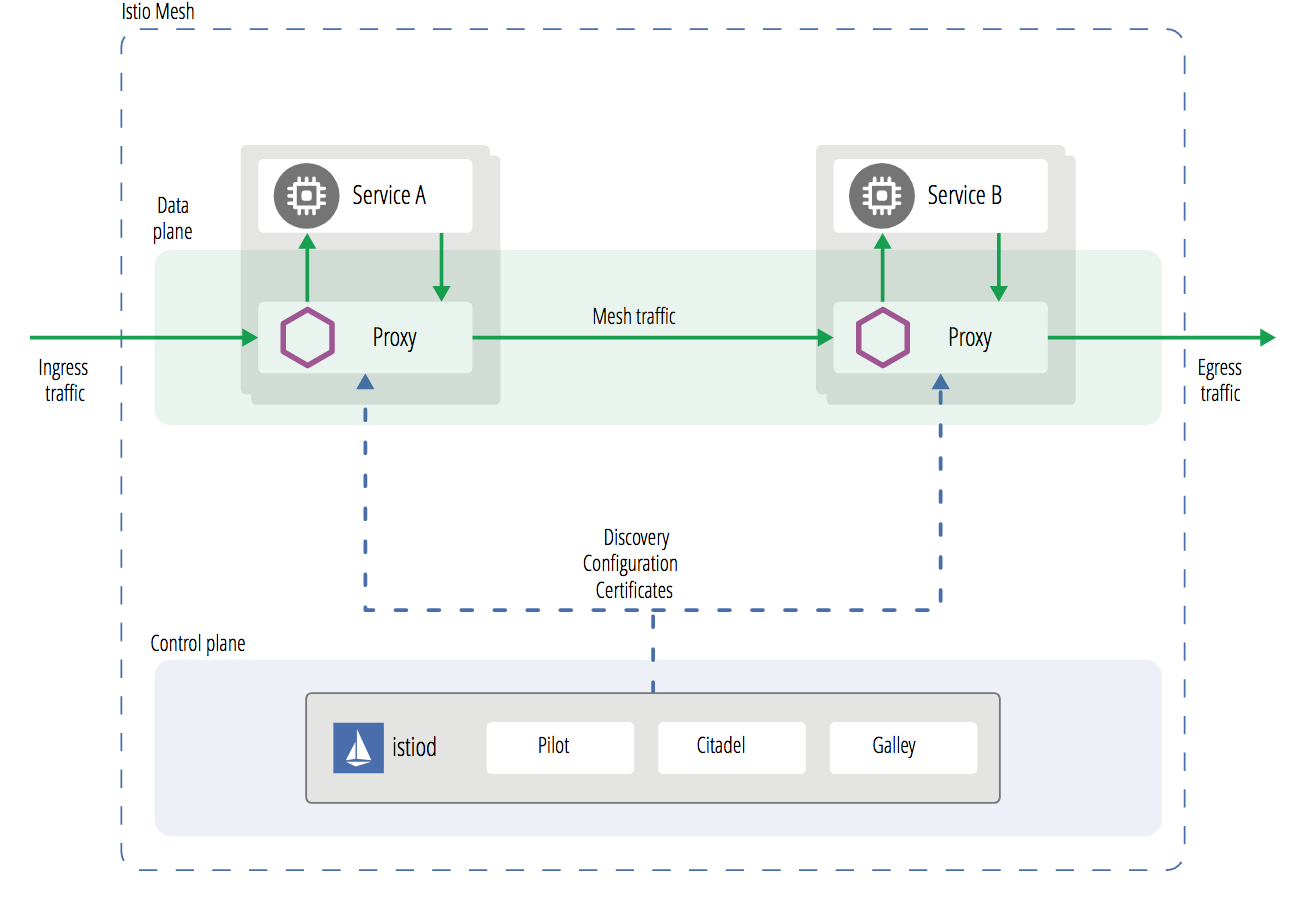

Service mesh is most often associated with controlling east-west communication between internal services. Service meshes like Linkerd, Istio and Kuma make it easier to apply the same logic (such as networking, observability, traffic management, security policies and routing) across many microservices from a single control plane, rather than reimplementing it in each one.

Service mesh was born to address slightly different challenges than API management. “The transition from monolithic to microservices is all about east-west traffic,” Cheshire said. Companies found it slower to innovate on monolithic applications and thus broke them up into microservices that communicate via APIs. But these microservices all needed similar operational capabilities, which would be cumbersome to repeat over and over for each service.

“For one monolithic app blown into microservices, you may not even need a service mesh,” said Cheshire. “However, when you have hundreds or thousands, that’s when applying service mesh becomes critical.”

Architecturally, service mesh is typically divided into a data plane and a control plane. The service mesh deploys a sidecar proxy that sits alongside each individual service; together, these proxies form the data plane, while the control plane configures and manages them. Istio, for example, utilizes the Envoy proxy. Other meshes like Linkerd use their own proxy.
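The split between the two planes can be sketched as follows. This is a simplified model under stated assumptions, not the API of Istio, Linkerd or Envoy: `Policy`, `SidecarProxy`, `ControlPlane` and the `max_retries` setting are hypothetical names, and real proxies intercept network traffic rather than wrapping function calls. The point is only that a policy is defined once and every sidecar enforces it.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Policy:
    """Operational settings pushed to every sidecar (hypothetical field)."""
    max_retries: int = 0


@dataclass
class SidecarProxy:
    """Data plane: one proxy per service, wrapping that service's outbound calls."""
    service_name: str
    policy: Policy = field(default_factory=Policy)
    calls: int = 0
    failures: int = 0

    def call(self, upstream: Callable[[], str]) -> str:
        attempts = self.policy.max_retries + 1
        for attempt in range(attempts):
            self.calls += 1
            try:
                return upstream()
            except ConnectionError:
                self.failures += 1
                if attempt == attempts - 1:
                    raise  # retries exhausted, surface the error to the service
        raise RuntimeError("policy allowed no attempts")


@dataclass
class ControlPlane:
    """Control plane: the single place where policy is defined and distributed."""
    proxies: List[SidecarProxy] = field(default_factory=list)

    def attach(self, service_name: str) -> SidecarProxy:
        proxy = SidecarProxy(service_name)
        self.proxies.append(proxy)
        return proxy

    def apply(self, policy: Policy) -> None:
        for proxy in self.proxies:
            proxy.policy = policy


def make_flaky(failures_before_success: int) -> Callable[[], str]:
    """Build a fake upstream that fails a fixed number of times, then succeeds."""
    remaining = {"n": failures_before_success}

    def upstream() -> str:
        if remaining["n"] > 0:
            remaining["n"] -= 1
            raise ConnectionError("transient failure")
        return "ok"

    return upstream


control_plane = ControlPlane()
cart = control_plane.attach("cart")
checkout = control_plane.attach("checkout")
control_plane.apply(Policy(max_retries=2))  # one change reaches every sidecar

result = cart.call(make_flaky(1))
print(result, cart.calls, cart.failures)
```

Neither the `cart` nor the `checkout` service contains any retry code of its own, which is the repetition the mesh is meant to remove.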

Strengths

- Fits the microservices architecture paradigm well

- Good for traffic routing; improving resiliency

- NIST-recommended architecture for achieving zero-trust

- Many open source options

- Fits large microservices deployments

Weaknesses

- Requires a more hands-on approach

- Potentially more maintenance to support

- Unnecessary for a single service

- Requires filter chain add-ons for north-south abilities

Comparing API Management and Service Mesh

API management is predominantly aligned with externalizing APIs to partners. When you do so, maintaining the contract with a consumer becomes more important for meeting SLAs. Thus, API management commonly delivers life cycle management abilities, such as versioning. API management is necessary even if you are exposing a single API, as this service needs to be adequately documented and secured. That being said, these tenets can also be relevant to developers reusing software within an organization; there’s something to be said for adopting a product mindset when dealing with internal APIs.

On the other hand, service mesh is more aligned with controlling internal networks. Microservices are rarely deployed alone; typically, multiple microservices make up an application. And many more might exist throughout an organization for unique purposes. Service mesh thus helps maintain consistency throughout large networks of decoupled microservices. Service mesh is also a rapidly growing field, experiencing an explosion of new development and production use. A Cloud Native Computing Foundation (CNCF) report found that service mesh use in production rose by 50% in 2020 alone.

To put the comparison another way, William Morgan, CEO of Buoyant, described how API management is more about business logic, whereas service mesh handles intra-cluster operations:

“API gateways typically handle ingress concerns, not intra-cluster concerns, and often deal with a certain amount of business logic, e.g. ‘User X is only allowed to make 30 requests a day’. By contrast, a service mesh like Linkerd is focused on operational concerns (e.g. instrument these calls and report their success rate; secure this communication between these pods) and purposefully avoids the mixing of business and operational logic; and focuses on communication between the services on the cluster.”
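Morgan's distinction can be illustrated with a small sketch. The two classes below are hypothetical and belong to no real gateway or mesh: `DailyQuota` models the kind of per-user business rule he mentions (30 requests a day), while `SuccessRateMeter` models a purely operational concern that counts outcomes without knowing who the caller is.

```python
from collections import defaultdict
from datetime import date
from typing import Callable, Dict, Tuple


class DailyQuota:
    """Gateway-style business rule: each user gets a fixed number of requests per day."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used: Dict[Tuple[str, date], int] = defaultdict(int)

    def allow(self, user: str, today: date) -> bool:
        key = (user, today)
        if self.used[key] >= self.limit:
            return False
        self.used[key] += 1
        return True


class SuccessRateMeter:
    """Mesh-style operational concern: count outcomes, independent of the caller."""

    def __init__(self) -> None:
        self.total = 0
        self.succeeded = 0

    def observe(self, call: Callable[[], object]) -> object:
        self.total += 1
        result = call()  # exceptions propagate; the attempt is still counted
        self.succeeded += 1
        return result

    @property
    def success_rate(self) -> float:
        return self.succeeded / self.total if self.total else 1.0


# Business logic: the 31st request from the same user on the same day is refused.
quota = DailyQuota(limit=30)
day = date(2024, 1, 1)
decisions = [quota.allow("user-x", day) for _ in range(31)]


# Operational logic: three successful calls and one failure.
def failing() -> str:
    raise ConnectionError("pod unavailable")


meter = SuccessRateMeter()
for call in (lambda: "ok", lambda: "ok", failing, lambda: "ok"):
    try:
        meter.observe(call)
    except ConnectionError:
        pass

print(decisions.count(True), decisions[-1], meter.success_rate)
```

The quota needs to know who the user is and what plan they are on; the meter needs neither, which is why the two concerns tend to live in different layers.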

Though service mesh and API management achieve slightly different aims, both layers make it easier to reuse web-based services. And they can certainly be used in conjunction. For example, you could build API-first microservices with service mesh and simultaneously use API management to expose certain domains. In another article, we’ll consider when it’s best to integrate the two technologies.